Empowering Control with Edge Computing

In the ever-evolving landscape of content delivery networks, we understand how important it is to give you, our clients, the tools you need to operate more autonomously. That’s why we’re eager to introduce a significant advancement that puts the power squarely in your hands: CDN77 Edge Computing.

Building on our standard solutions, the platform lets you tailor how online interactions are handled to your business and technical goals while maintaining high performance and security. Our aim is to give you complete control over how our edge servers transform, cache, and handle HTTP requests.

This blog post explores the essence of CDN77 Edge Computing and the challenges we overcame during its development. We know this is a game changer for many clients, so we’re launching a beta access program. You can already apply for beta access by reaching out to your account manager or our sales team. We would love to hear your feedback or feature suggestions for your specific use case.

Let’s get into it!

🔥 CDN Infrastructure & Motivation

As we specialize in content delivery, we depend heavily on our infrastructure. We operate thousands of edge servers running across the globe. This infrastructure is supported by a 130 Tbps network with a global private backbone.

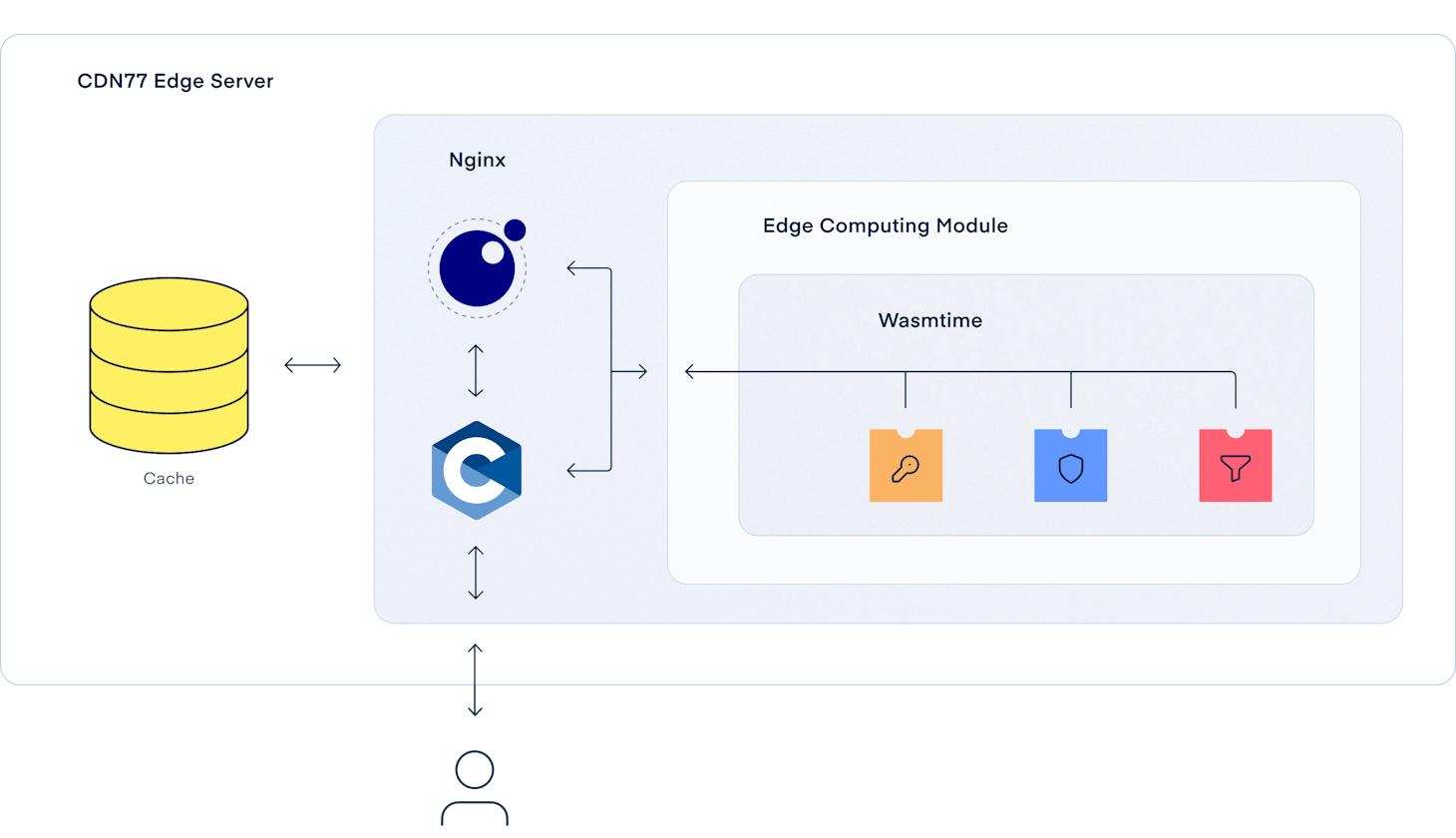

It’s no secret that our edge servers are powered by our fork of Nginx enhanced with OpenResty. This dynamic combination empowers our servers to store and deliver content efficiently. It offers advanced customization capabilities through real-time manipulation of HTTP requests and responses, ensuring optimal performance and tailored content delivery for every user.

Through our client panel or our extensive API, you can set up various features, rules, and basic request manipulation. These configurations are then propagated to our edge servers and executed when needed. Technically, they are usually implemented as a combination of Lua scripts and Nginx configuration.
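For comparison with the approach described in the following sections, here is a minimal sketch of what declarative rule evaluation boils down to: every rule is a predefined kind of match plus a predefined action. The `Rule`, `Outcome`, and `apply_rules` names are hypothetical; this post doesn't describe the actual rule schema, and in production such rules are expressed as Lua and Nginx configuration rather than Rust.

```rust
use std::collections::HashMap;

/// Hypothetical stand-in for a rule configured through a panel or API.
#[derive(Debug, Clone)]
enum Rule {
    /// Add a header when the request path starts with `prefix`.
    SetHeader { prefix: String, name: String, value: String },
    /// Redirect requests whose path starts with `prefix` to `location`.
    Redirect { prefix: String, location: String },
}

/// Result of evaluating all rules against one request path.
#[derive(Debug, Default)]
struct Outcome {
    headers: HashMap<String, String>,
    redirect: Option<String>,
}

/// Evaluate the rules in order; the first matching redirect wins.
fn apply_rules(rules: &[Rule], path: &str) -> Outcome {
    let mut out = Outcome::default();
    for rule in rules {
        match rule {
            Rule::SetHeader { prefix, name, value } if path.starts_with(prefix.as_str()) => {
                out.headers.insert(name.clone(), value.clone());
            }
            Rule::Redirect { prefix, location }
                if path.starts_with(prefix.as_str()) && out.redirect.is_none() =>
            {
                out.redirect = Some(location.clone());
            }
            // Non-matching rules are skipped.
            _ => {}
        }
    }
    out
}

fn main() {
    let rules = vec![
        Rule::SetHeader {
            prefix: "/static/".into(),
            name: "Cache-Control".into(),
            value: "max-age=86400".into(),
        },
        Rule::Redirect { prefix: "/old/".into(), location: "/new/".into() },
    ];
    println!("{:?}", apply_rules(&rules, "/static/app.js"));
    println!("{:?}", apply_rules(&rules, "/old/page"));
}
```

Anything that doesn't fit one of the predefined variants needs a new variant added on the platform side, which is exactly the limitation that running customer code removes.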

For customers who need more advanced or tailored configurations that our API doesn’t expose, our Support Team can configure almost any request or routing transformation.

While our API covers most common HTTP request transformation use cases, we wanted to offer more. Our customers know what is best for them and should be able to manipulate their requests however and whenever they want, in a secure and isolated manner. Essentially, we wanted to give them full autonomy over what they can do themselves and let them see and debug routing changes instantly.

🚀 CDN77 Edge Computing

Our focus in designing the Edge Computing platform was to transform requests securely and dynamically. We also needed to load new modifications and plugins on the fly without affecting or slowing down anything else running on our edge servers. Additionally, we wanted our plugins to integrate seamlessly with Nginx, allowing us to adjust virtually any internal behavior as needed.
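This post doesn't describe how plugins are swapped at runtime. One common way to load new versions on the fly without disturbing anything else is a per-host plugin table behind a read-write lock, holding reference-counted plugins, sketched below. `Registry`, `deploy`, `handle`, and the `Plugin` signature are hypothetical, and a real plugin would be a compiled Wasm module rather than a Rust closure.

```rust
use std::collections::HashMap;
use std::sync::{Arc, RwLock};

/// Hypothetical plugin signature: takes a request path, returns a rewritten path.
type Plugin = Arc<dyn Fn(&str) -> String + Send + Sync>;

/// Per-hostname plugin table that can be updated while requests are being served.
#[derive(Default)]
struct Registry {
    plugins: RwLock<HashMap<String, Plugin>>,
}

impl Registry {
    /// Install or replace the plugin for `host`; entries for other hosts are untouched.
    fn deploy(&self, host: &str, plugin: Plugin) {
        self.plugins.write().unwrap().insert(host.to_string(), plugin);
    }

    /// Clone the current plugin handle, release the lock, then run the plugin,
    /// so a slow plugin never blocks deployments or lookups for other hosts.
    fn handle(&self, host: &str, path: &str) -> String {
        let plugin = self.plugins.read().unwrap().get(host).cloned();
        match plugin {
            Some(p) => p(path),
            // Hosts without a plugin pass through unchanged.
            None => path.to_string(),
        }
    }
}

fn main() {
    let registry = Registry::default();
    registry.deploy("a.example", Arc::new(|path: &str| format!("/v1{path}")));
    println!("{}", registry.handle("a.example", "/index.html"));

    // Hot-swap to a new version; requests already holding the old Arc finish with it.
    registry.deploy("a.example", Arc::new(|path: &str| format!("/v2{path}")));
    println!("{}", registry.handle("a.example", "/index.html"));
    println!("{}", registry.handle("b.example", "/index.html"));
}
```

Because each request clones an `Arc` to the plugin it started with, replacing an entry never interrupts in-flight work; the old version is freed once its last request completes.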

Another goal was to be able to express any business logic a client might ever need. Therefore, we wanted to execute actual code rather than be limited by a basic grammar or expression language. However, this decision posed a challenge: how do we execute untrusted code safely without compromising the security of our servers?

🚔 Running Untrusted Code

Running untrusted code in a trusted environment is hard. Implementing isolation and sandboxing that reliably prevent unauthorized access to system resources such as files, the network, or customer data demands meticulous attention to detail. Moreover, the untrusted code has to run fast.

While designing our platform, we considered isolated processes, microVMs, and containers. We found isolated processes not safe enough for our use case. MicroVMs are secure but bring other challenges, such as long cold starts and slow communication between plugins and our Nginx. Containers might help with cold starts and communication overhead, but they are still far behind our final choice.

The answer for our use case lies in the binary world - WebAssembly.

🏗️ WebAssembly

WebAssembly (Wasm) is an ideal choice for executing untrusted code on servers due to its sandboxed and efficient design. Wasm provides a low-level binary instruction format that can be executed at near-native speed, ensuring high performance for diverse workloads. The sandboxing mechanism of Wasm provides a secure execution environment that isolates untrusted code from the host system, mitigating potential security risks and preventing unauthorized access to resources.
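Much of that isolation comes from the fact that a Wasm module can only address its own linear memory: whatever it hands to the host is just an offset and a length into that memory, and the host must validate both before reading. The sketch below models linear memory as a plain byte slice; `read_guest_str` and `GuestMemError` are hypothetical names, and real runtimes such as Wasmtime provide their own memory-access APIs.

```rust
/// Reasons a guest-supplied string can be rejected by the host.
#[derive(Debug, PartialEq)]
enum GuestMemError {
    /// The (offset, length) pair points outside the guest's linear memory.
    OutOfBounds,
    /// The bytes are in bounds but are not valid UTF-8.
    InvalidUtf8,
}

/// Copy a UTF-8 string the guest placed in its own linear memory.
/// Every access is checked against the memory's current size.
fn read_guest_str(memory: &[u8], offset: u32, len: u32) -> Result<String, GuestMemError> {
    let start = offset as usize;
    // checked_add guards against offset + len overflowing.
    let end = start
        .checked_add(len as usize)
        .ok_or(GuestMemError::OutOfBounds)?;
    // slice::get returns None instead of panicking when the range is out of bounds.
    let bytes = memory.get(start..end).ok_or(GuestMemError::OutOfBounds)?;
    // Never trust guest data: validate encoding before handing it to host code.
    String::from_utf8(bytes.to_vec()).map_err(|_| GuestMemError::InvalidUtf8)
}

fn main() {
    let memory = b"....hello....".to_vec();
    println!("{:?}", read_guest_str(&memory, 4, 5));
    println!("{:?}", read_guest_str(&memory, 10, 100));
}
```

A guest that passes a bogus pointer gets an error back instead of reading host memory, which is the property that makes executing customer code in-process acceptable.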

Wasm’s platform-independent architecture enables code portability across different servers and platforms. Combined with its performance and security, this portability means untrusted code can be executed reliably and efficiently within a controlled sandbox virtually anywhere.

Because Wasm is a portable format, all you need to execute Wasm plugins is a Wasm runtime. After extensive research, we chose Wasmtime. It is written in Rust (❤️), offers a C API, is battle-tested, and can be embedded in an already-running process.

🏞️ Running WebAssembly in Nginx

Latency and response time are among the many metrics used to measure the performance of a CDN. While building our Edge Computing platform, our main objective was to keep these metrics low without increasing the overall infrastructure load. To do that, we decided to run Edge Computing plugins as close to the infrastructure edge as possible, and Nginx is the first place that can understand and process L7 data. That’s one of the reasons we embedded Wasmtime directly into our Nginx. Nginx worker processes can now load and execute Wasm plugins and utilize the full power of Wasmtime while keeping the communication overhead between the plugin and Nginx close to zero.

Let’s explore some examples in Rust. These are plugins, or variants of them, that we’ve been deploying to production for some time.
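As an illustration of the shape such a plugin can take, here is a minimal sketch in plain Rust. `Request`, `Action`, `on_request`, and the `x-country` and `x-origin` header names are hypothetical stand-ins, not the CDN77 SDK API, which this post doesn't describe. The plugin permanently redirects a retired URL prefix and tags every other request with the origin it should be routed to.

```rust
use std::collections::HashMap;

/// Hypothetical stand-in for an incoming request as a plugin might see it.
struct Request {
    path: String,
    headers: HashMap<String, String>,
}

/// Hypothetical plugin verdict: continue processing or answer with a redirect.
#[derive(Debug, PartialEq)]
enum Action {
    Continue,
    Redirect { status: u16, location: String },
}

/// Example plugin logic, run once per request.
fn on_request(req: &mut Request) -> Action {
    // Retired section: send clients to the new location with 301 Moved Permanently.
    if let Some(rest) = req.path.strip_prefix("/blog/") {
        return Action::Redirect { status: 301, location: format!("/articles/{rest}") };
    }
    // Choose an origin by country; the header names are assumptions for illustration.
    let origin = match req.headers.get("x-country").map(String::as_str) {
        Some("DE") | Some("AT") | Some("CH") => "eu-origin",
        _ => "default-origin",
    };
    req.headers.insert("x-origin".to_string(), origin.to_string());
    Action::Continue
}

fn main() {
    let mut old = Request { path: "/blog/edge-computing".to_string(), headers: HashMap::new() };
    println!("{:?}", on_request(&mut old));

    let mut img = Request {
        path: "/img/logo.png".to_string(),
        headers: HashMap::from([("x-country".to_string(), "DE".to_string())]),
    };
    let action = on_request(&mut img);
    println!("{:?} {:?}", action, img.headers.get("x-origin"));
}
```

Unlike a fixed rule set, the branching here is ordinary code, so conditions can be combined arbitrarily.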

🔮 Looking Into the Future

As we journey into the future of web services and content delivery, CDN77’s new Edge Computing platform is set to evolve and expand, bringing more power and flexibility to developers and businesses alike. With a strong foundation already in place, the following features are under active development and will soon be available to beta testers.

🗄️ Persistent Distributed Storage

Because any general-purpose computing platform needs to be able to persist data, we’re working on a distributed, eventually consistent key-value store and a distributed SQLite database. Persistent distributed storage is currently our number one priority.
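This post doesn't say how the key-value store will resolve conflicting writes. One common strategy for eventually consistent stores is last-writer-wins (LWW), sketched below: every value carries a timestamp plus a writer id for tie-breaking, and replicas converge to the same state regardless of the order in which they exchange updates. `Replica`, `Entry`, `put`, and `merge` are hypothetical names, not the planned design.

```rust
use std::collections::HashMap;

/// A value tagged with a logical timestamp and the writing node's id,
/// which breaks ties deterministically.
#[derive(Debug, Clone, PartialEq)]
struct Entry {
    value: String,
    timestamp: u64,
    node: u32,
}

/// One replica's view of the store.
#[derive(Debug, Default, Clone, PartialEq)]
struct Replica {
    data: HashMap<String, Entry>,
}

impl Replica {
    fn put(&mut self, key: &str, value: &str, timestamp: u64, node: u32) {
        self.apply(key, Entry { value: value.to_string(), timestamp, node });
    }

    /// Keep whichever entry has the larger (timestamp, node) pair.
    fn apply(&mut self, key: &str, incoming: Entry) {
        let keep_current = self.data.get(key).map_or(false, |cur| {
            (cur.timestamp, cur.node) >= (incoming.timestamp, incoming.node)
        });
        if !keep_current {
            self.data.insert(key.to_string(), incoming);
        }
    }

    /// Merge another replica's state; the result is independent of merge order.
    fn merge(&mut self, other: &Replica) {
        for (key, entry) in &other.data {
            self.apply(key, entry.clone());
        }
    }

    fn get(&self, key: &str) -> Option<&str> {
        self.data.get(key).map(|e| e.value.as_str())
    }
}

fn main() {
    let mut a = Replica::default();
    let mut b = Replica::default();
    a.put("color", "red", 1, 1);
    b.put("color", "blue", 2, 2);
    a.merge(&b);
    b.merge(&a);
    println!("{:?} {:?}", a.get("color"), b.get("color"));
}
```

LWW is simple and convergent but silently discards the losing write, so a real store may pick a different conflict-resolution scheme depending on the data.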

🔨 SDKs, SDKs, SDKs…

We currently have feature-complete SDKs for Rust, Golang, and AssemblyScript. Our JavaScript and TypeScript SDKs are still in their infancy, but we plan to open and publish them shortly. Eventually, we plan to deliver SDKs for even more Wasm-enabled languages so developers can deploy to our platform using languages they’re familiar with.

🐦 HTTP Requests

Our Edge Computing platform is currently designed to handle incoming requests to our CDN, but we plan to expand its capabilities so it can also dispatch HTTP requests.

🖌️ Server-Side Rendering

Given our extensive global infrastructure, we aim to provide even greater utility to our customers than is currently possible. One way we plan to do that is by supporting server-side rendering frameworks such as Next.js, Nuxt, and SvelteKit.

🛫 Beta Program and Next Steps

We hope you share our excitement about the expansive new possibilities opened up by CDN77 Edge Computing. We’ve been testing the platform extensively, both internally and with selected customers, for almost a year, serving billions of requests and petabytes of traffic through it. In fact, this very site was served to you by one of our Edge Computing plugins.

We'd love to hear your feedback and suggestions, so do not hesitate to contact us and tell us how you would like to utilize this platform.

Platform Architect